How to Read Secrets from Jenkins Secrets

Introduction –

In Jenkins, “Jenkins Secrets” is used to store user credentials and similar secrets, such as API keys, access tokens, or usernames and passwords, that are used in Jenkins pipelines or jobs.

Jenkins uses AES to encrypt and protect secrets, credentials, and their respective encryption keys. These encryption keys are stored in $JENKINS_HOME/secrets/ along with the master key used to protect said keys.

How to Add Secrets in Jenkins Secrets (JS) –

Log in to the Jenkins UI, then go to “Manage Jenkins”…

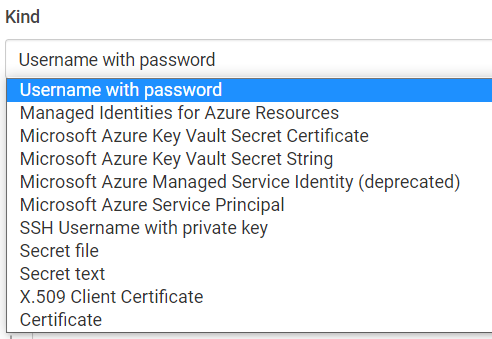

Different types of secrets can be created in Jenkins Secrets, as mentioned below…

Secrets in Jenkins have different types of scopes, as mentioned below…

How to Read Jenkins Secrets from a Jenkins Pipeline or Job –

Credentials Binding Plugin –

Ref – https://www.jenkins.io/doc/pipeline/steps/credentials-binding/

The “Credentials Binding Plugin” can be used to read secrets from Jenkins Secrets and use them in a Groovy pipeline. The plugin also masks secret values when they are printed in the pipeline log, for security.

1) Read Jenkins Secrets of Type “Secret text” –

Ref – https://github.com/arunbagul/jenkins-groovy/blob/main/Read-Jenkins-Secret-of-Type-Secret-text.groovy

//Read Jenkins Secret of Type "Secret text"

//version 1.0

//Plugin

//credentials-binding ~ https://www.jenkins.io/doc/pipeline/steps/credentials-binding/

node('master') {

withCredentials([string(credentialsId: 'JS_SECRET_ID' , variable: 'password_from_js')]) {

println "Secret from Jenkins Secret: ${password_from_js}"

}

}

2) Read Jenkins Secrets of Type “Username with password” –

//Read Jenkins Secret of Type "Username with password"

//version 1.0

//Plugin

//credentials-binding ~ https://www.jenkins.io/doc/pipeline/steps/credentials-binding/

node('master') {

withCredentials([usernamePassword(credentialsId: 'GIT_TOKEN_JS_ID', usernameVariable: 'gitUserName', passwordVariable: 'github_token')]) {

println "Username/Password from Jenkins Secret: Username: ${gitUserName}, Password: ${github_token}"

}

}
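In a declarative pipeline, the same kind of binding can be written with the `credentials()` helper inside an `environment` block. The following is a minimal sketch, not part of the original scripts: it assumes a “Username with password” secret whose ID is 'GIT_TOKEN_JS_ID', and the variable name GIT_CREDS and the stage name are placeholders chosen for illustration.

```groovy
// Declarative pipeline sketch: bind a "Username with password" secret.
// 'GIT_TOKEN_JS_ID', GIT_CREDS and the stage name are placeholders (assumptions).
pipeline {
    agent any
    environment {
        // For this secret type Jenkins also defines GIT_CREDS_USR and GIT_CREDS_PSW
        GIT_CREDS = credentials('GIT_TOKEN_JS_ID')
    }
    stages {
        stage('Use credentials') {
            steps {
                // Single quotes: the shell expands the variable, Groovy does not interpolate it
                sh 'echo "Username: $GIT_CREDS_USR"'
            }
        }
    }
}
```

Passing the secret to the shell through its environment, rather than through a Groovy double-quoted string, keeps the value out of the command string itself.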

3) How to assign Jenkins Secrets to a global variable –

//How to assign Jenkins Secret to global variable

//version 1.0

//Plugin

//credentials-binding ~ https://www.jenkins.io/doc/pipeline/steps/credentials-binding/

//get secret from jenkins-secret

node('master') {

withCredentials([usernamePassword(credentialsId: 'GIT_TOKEN_JS_ID', usernameVariable: 'gitUserName', passwordVariable: 'gtoken')]) {

env.GIT_TOKEN = gtoken

}

//Outside withCredentials the value is no longer masked in the console log

println "GIT_TOKEN = ${env.GIT_TOKEN}"

}

4) How to retrieve (recover) secret from “Jenkins Secret” –

Sometimes we need to read secrets from Jenkins and use them in different stages of a pipeline. It is always recommended to use secrets only within the “withCredentials {}” scope. But to simplify, we can write the secret to a file, as the following script does:

//How to retrieve (recover) secret from "Jenkins Secret"

//version 1.0

//Plugin

//credentials-binding ~ https://www.jenkins.io/doc/pipeline/steps/credentials-binding

node('master') {

withCredentials([string(credentialsId: 'JS_SECRET_ID' , variable: 'password_from_js')]) {

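//Single quotes let the shell, not Groovy, expand the variable, so the secret is not interpolated into the command string

shell_output = sh(script: 'echo "$password_from_js" > write_to_file', returnStdout: true).trim()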

}

}
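When the secret is needed in several stages, one option that stays within the recommended “withCredentials {}” scope is to nest the stages inside a single block. The following is a minimal sketch under that assumption: it reuses the 'JS_SECRET_ID' secret from the examples above, and the stage names are placeholders.

```groovy
// Scripted pipeline sketch: keep every stage that needs the secret inside withCredentials.
// The stage names are placeholders (assumptions).
node('master') {
    withCredentials([string(credentialsId: 'JS_SECRET_ID', variable: 'password_from_js')]) {
        stage('Build') {
            // The shell reads the secret from its environment; the value itself is never echoed
            sh 'test -n "$password_from_js" && echo "secret is available in Build"'
        }
        stage('Deploy') {
            sh 'test -n "$password_from_js" && echo "secret is available in Deploy"'
        }
    }
}
```

With this layout the secret does not need to be copied into a global variable or written to a file, so it stays masked wherever it is used.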

Ref –

https://www.jenkins.io/doc/developer/security/secrets

https://www.jenkins.io/doc/pipeline/steps/credentials-binding

Thank you,

Arun Bagul